Immersive Systems and Sensory Stimulation Laboratory

This lab of the Institute of Systems and Robotics is led by Prof. Paulo Menezes and was created in 2019 with a focus on the human as the centre of new interaction paradigms.

If you are looking for Affective Computing and Interaction, Behaviour and Intention Analysis, Augmented/Virtual and Mixed Reality, or Neural Computing, you are in the right place.

Move In Tempo: Maria Rita Nogueira's work was exhibited in Seoul as part of the international exhibition "Color 2022" at the Czong Institute for Contemporary Art (CICA) Museum in September 2022. It had previously been exhibited at the Museu Nacional Machado de Castro and at CRIATECH 2021, where she received the Artistic Residency prize.

Augmented Reality

Developing Augmented Reality Support and Applications since 2005.

Augmented reality has great potential across a broad set of application cases. Providing information and guidance during task execution in industrial, medical, exploration, and many other scenarios may reduce overall task completion times and the number of human errors that typically occur. Health, education, and entertainment are also potential targets.

With us...

IS3L is focused on Real Problems of Real People in the Real World

Looking for a place to develop skills for your future career, or searching for a solution for your problem?

Creativity

As a student or collaborator, your ideas for making our projects even better are always welcome.

Multidisciplinary

Learning about and contributing to other scientific and technical areas is part of our mission.

Research and Development

By targeting real-world problems, we aim to contribute to a better quality of life for all.

Technology Transfer

IS3L collaborates with industrial companies

We work with industry to turn concepts into solutions or products.

Affective Interaction

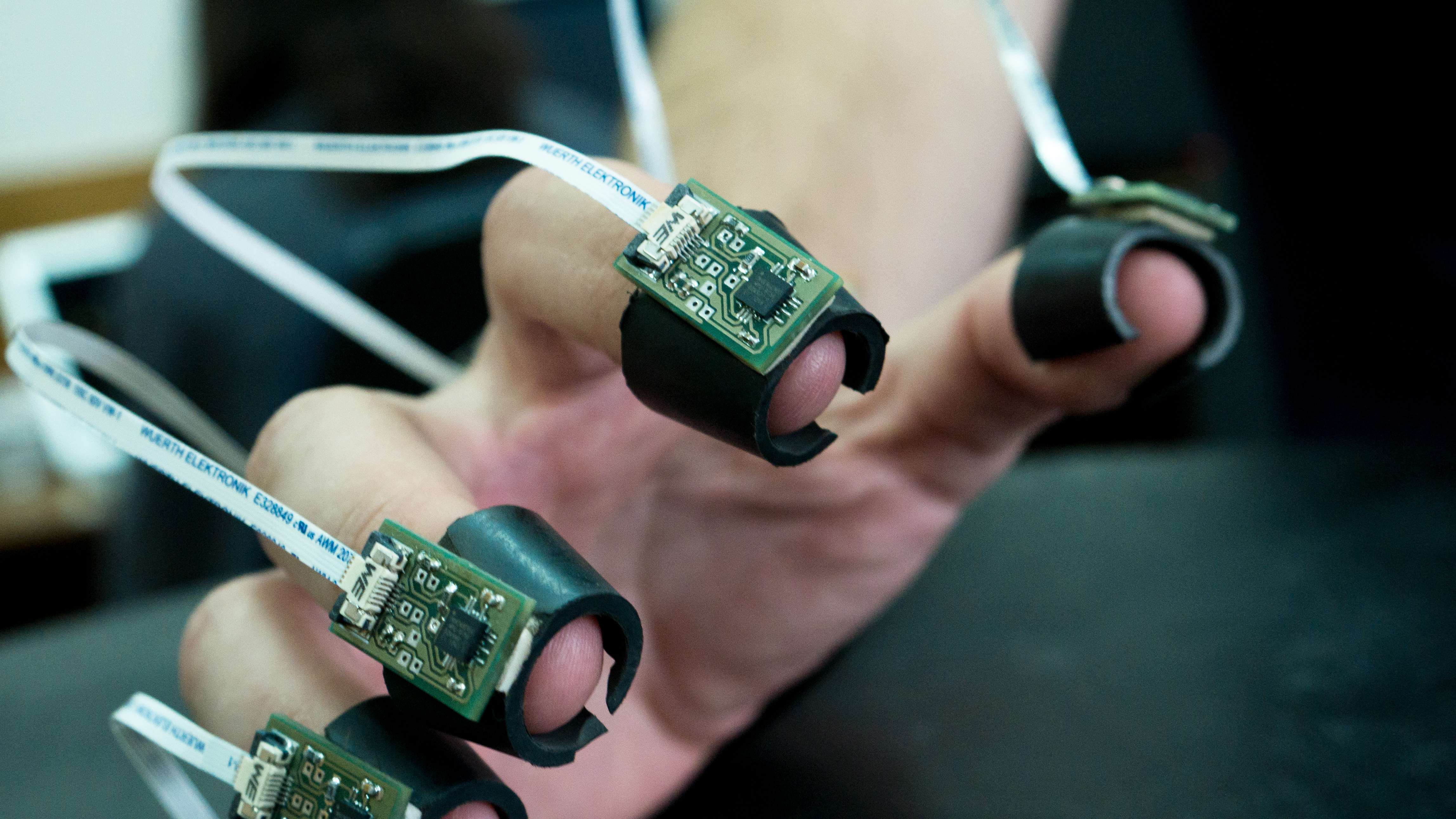

As humans are driven by emotions, interactive systems and robots must take emotions into account.

Interactive systems must learn to adapt to users, understand their emotions, and even reciprocate by exhibiting emotions in order to develop some level of empathy. You are invited to participate in our study and help teach a robot how to express emotions.

Interaction with the Focus on the User

Empathic Robots

Neural Computing

Deep Neural Networks are unlocking complex problems every day.

Besides exploiting existing architectures in new application fields, we are exploring new biologically inspired structures.

Joining in

Prospective Students

Check the Opportunities Page or contact Prof. Paulo Menezes.

Expert People

Passionate People on Our Team

Every PhD or MSc student on our team is passionate about what they do. Meet them to learn about their subjects and their enthusiasm.